AI agents are a security nightmare. Moving the entire dev workflow to QEMU

AI agents are increasingly targeted by attackers. The Clinejection attack demonstrated this risk at scale. Attackers hid a prompt injection payload inside a GitHub issue. When developers used AI agents to process the issue, the payload hijacked the agents. This exploit allowed the attackers to install hidden software on 4,000 developer machines.

This incident shows why running AI agents without a sandbox is dangerous. A hijacked agent operates with the user’s local permissions, so it has immediate access to plaintext credentials. After a successful prompt injection, the agent can silently read .aws credentials (stored as plain text), .ssh keys, API tokens, and environment variables.
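A quick audit shows what a hijacked agent would be able to reach. The Python sketch below is illustrative and not part of the setup described here. It lists which of the credential files mentioned above a process running as your user can read, and which environment variable names look like secrets. The path list and the name markers (TOKEN, SECRET, KEY, PASSWORD) are assumptions, so adjust them to your own machine.

```python
import os
from pathlib import Path

# Assumed credential locations, taken from the examples in the text; extend as needed.
SENSITIVE_PATHS = [".aws/credentials", ".aws/config", ".ssh"]

# Assumed substrings that usually mark a secret in an environment variable name.
SECRET_MARKERS = ("TOKEN", "SECRET", "KEY", "PASSWORD")


def readable_secrets(home: Path) -> list[str]:
    """Return sensitive files under `home` that the current process can read."""
    found = []
    for rel in SENSITIVE_PATHS:
        path = home / rel
        if path.is_dir():
            # Report every readable file in the directory, e.g. private keys in ~/.ssh.
            for child in sorted(path.iterdir()):
                if child.is_file() and os.access(child, os.R_OK):
                    found.append(str(child.relative_to(home)))
        elif path.is_file() and os.access(path, os.R_OK):
            found.append(rel)
    return found


def secret_env_vars(env: dict[str, str]) -> list[str]:
    """Return the names of environment variables that look like they hold secrets."""
    return sorted(
        name for name in env if any(marker in name.upper() for marker in SECRET_MARKERS)
    )
```

Calling readable_secrets(Path.home()) and secret_env_vars(dict(os.environ)) in the same terminal session an agent would use gives a rough picture of what that agent could read without any privilege escalation.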

Without sandboxing, these AI agents expose critical infrastructure to external control.

The explosion of AI agents has made isolation critical, and many sandboxing technologies have seen heavy use in recent years as a result. Firecracker, gVisor, Kata Containers, Docker Sandbox, and many others address this need.

However, none of these fit easily into my development setup. There are several reasons for this, primarily:

Immature Security: Modern sandboxing technologies like Docker Sandbox or Firecracker rely on complex shim layers and specific kernel features that are still evolving.

Agentic Execution Risk: AI agents like Claude Code and Gemini CLI operate as “black boxes” with autonomous terminal access. Their heavy reliance on the Node.js and Python ecosystems introduces massive dependency trees and supply-chain vulnerabilities. A single prompt injection could allow an agent to silently read keys, passwords, and environment variables.

Loss of Data Sovereignty: Most industry-standard sandboxes are cloud-native or API-driven, requiring source code and context to be uploaded to third-party servers.

Going back to the Docker issue:

Two years ago, I wrote an article about my issues with Docker. I started using an isolated QEMU VM, installed Docker inside it, and removed Docker from the host system. That change led me to move all repositories and code into the VM. Two years later, this setup still works perfectly for most Rust projects.

In contrast to newer sandboxing tools, QEMU is a mature, battle-tested hypervisor with a well-defined security boundary that has been audited over decades. A local QEMU setup ensures that all data remains on local disk and allows for a high-performance workflow that functions entirely offline.

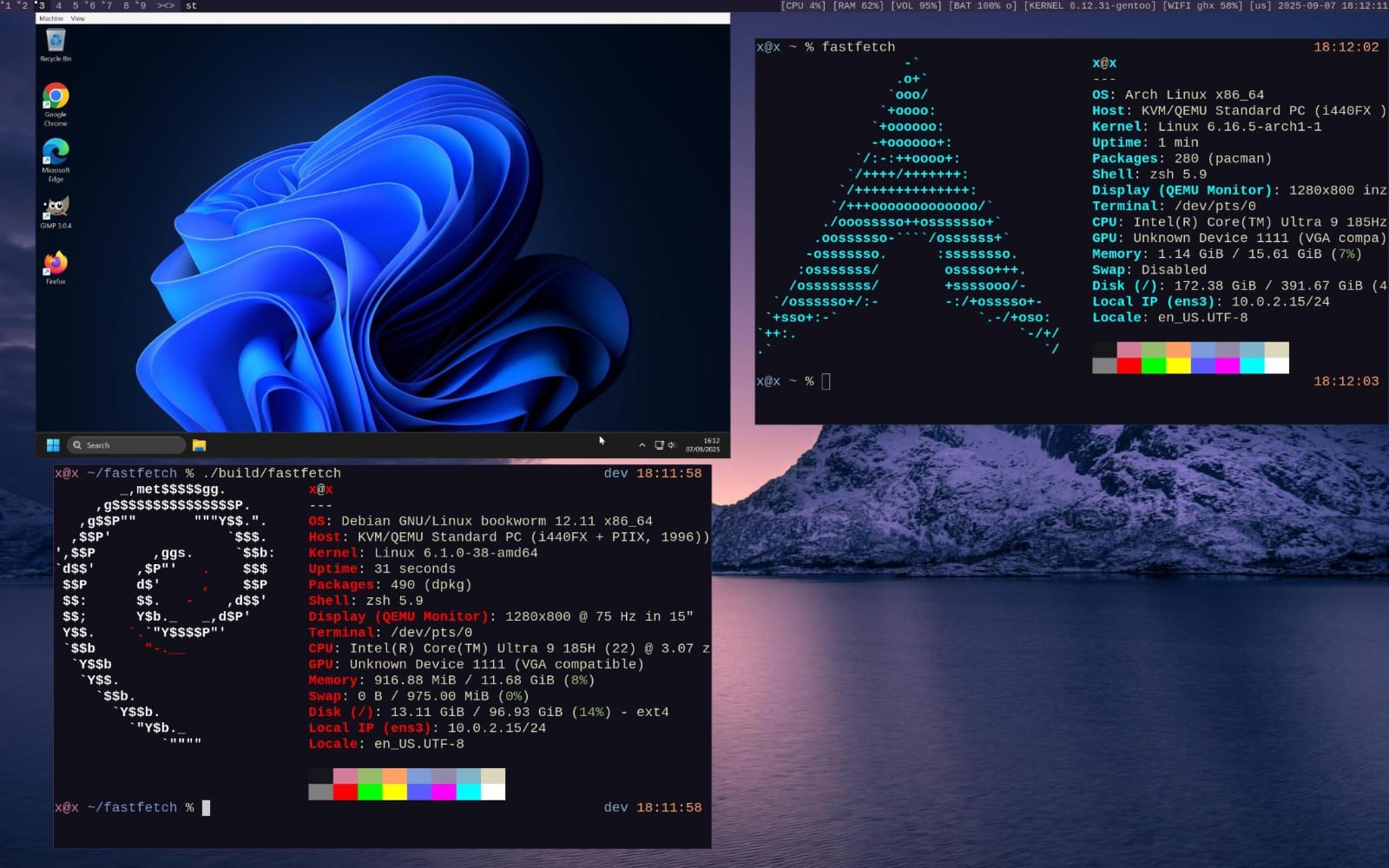

The host system runs Gentoo Linux. Controlling compile flags and dependencies keeps the system minimal, resulting in fewer packages and a smaller attack surface. Tools like Docker, Node.js, Claude, and Gemini are removed from the host.

Recently, the workspace was divided into five separate VMs instead of using other sandboxing technologies. These mostly run Arch Linux for its rich package repositories. Current VMs include:

Personal: For private development and experimental projects.

Work: For my full-time job.

Credentials: Secure storage for keys and passwords. No AI, Docker, or Node.js allowed.

Tor: An anonymous workspace where all outbound traffic is hard-routed through the Tor network using iptables.

Windows: A Windows VM, used mainly for Power BI.
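One common way to hard-route traffic through Tor with iptables is Tor's transparent proxy mode. The nat table redirects DNS and new TCP connections to Tor's local ports, and the filter table rejects anything that did not go through Tor. The Python sketch below only builds the iptables commands and is not necessarily the rule set used in the Tor VM. It assumes the daemon runs as the tor user and that torrc sets TransPort 9040 and DNSPort 5353. It does not cover IPv6, which would need matching ip6tables rules or IPv6 disabled inside the VM.

```python
import subprocess

# Assumptions, not from the text: tor runs as user "tor" and torrc contains
#   TransPort 9040
#   DNSPort 5353
TOR_USER = "tor"
TRANS_PORT = 9040
DNS_PORT = 5353


def tor_only_rules(
    tor_user: str = TOR_USER, trans_port: int = TRANS_PORT, dns_port: int = DNS_PORT
) -> list[list[str]]:
    """Build iptables argv lists that force all outbound IPv4 traffic through Tor."""
    nat = ["iptables", "-t", "nat", "-A", "OUTPUT"]
    flt = ["iptables", "-A", "OUTPUT"]
    return [
        # nat: leave tor's own traffic and loopback traffic untouched.
        nat + ["-m", "owner", "--uid-owner", tor_user, "-j", "RETURN"],
        nat + ["-d", "127.0.0.0/8", "-j", "RETURN"],
        # nat: send DNS to tor's DNSPort and every new TCP connection to its TransPort.
        nat + ["-p", "udp", "--dport", "53", "-j", "REDIRECT", "--to-ports", str(dns_port)],
        nat + ["-p", "tcp", "--syn", "-j", "REDIRECT", "--to-ports", str(trans_port)],
        # filter: allow replies (e.g. to inbound SSH), loopback, and tor itself.
        flt + ["-m", "conntrack", "--ctstate", "ESTABLISHED,RELATED", "-j", "ACCEPT"],
        flt + ["-d", "127.0.0.0/8", "-j", "ACCEPT"],
        flt + ["-m", "owner", "--uid-owner", tor_user, "-j", "ACCEPT"],
        # filter: reject everything else, so nothing leaves the VM outside Tor.
        flt + ["-j", "REJECT"],
    ]


def apply_rules(rules: list[list[str]]) -> None:
    """Run each rule in order. Requires root; assumes the OUTPUT chains start empty."""
    for rule in rules:
        subprocess.run(rule, check=True)
```

The final REJECT is what makes the routing "hard": if Tor is down, connections fail instead of silently falling back to the clearnet.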

The virtual machines are managed with a custom shell script, vms. It hides the complexity of QEMU flags by organizing configurations and images into separate directories. The daily workflow is simple: boot a VM and connect over SSH using unique keys configured in ~/.ssh/config. Work is done entirely over SSH with nvim, relying on sshfs when access to host files is required. All tools, including Claude, run inside the virtual machine, so nothing escapes the VM. Thanks to QEMU, the setup is highly performant; it easily handles heavy tasks like compiling a big Rust workspace.
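The vms script itself is not shown here. As a rough illustration of the idea, the Python sketch below maps one directory per VM to a QEMU command line and renders a matching ~/.ssh/config entry with a dedicated key. The directory layout (disk.qcow2, vm.conf), the defaults, the key naming scheme, and all function names are hypothetical; the QEMU and ssh_config options used are standard ones.

```python
import subprocess
from pathlib import Path

# Hypothetical layout, not the actual vms script:
#   ~/vms/<name>/disk.qcow2   the VM image
#   ~/vms/<name>/vm.conf      key=value lines such as memory=8G, cpus=4, ssh_port=2222
VMS_ROOT = Path.home() / "vms"


def load_config(vm_dir: Path) -> dict[str, str]:
    """Parse key=value lines from vm.conf over some defaults, skipping blanks and # comments."""
    config = {"memory": "4G", "cpus": "2", "ssh_port": "2222"}
    conf = vm_dir / "vm.conf"
    if conf.exists():
        for line in conf.read_text().splitlines():
            line = line.strip()
            if line and not line.startswith("#"):
                key, _, value = line.partition("=")
                config[key.strip()] = value.strip()
    return config


def qemu_argv(vm_dir: Path) -> list[str]:
    """Translate a VM directory into a headless, KVM-accelerated QEMU command line."""
    cfg = load_config(vm_dir)
    return [
        "qemu-system-x86_64",
        "-enable-kvm", "-cpu", "host",
        "-m", cfg["memory"], "-smp", cfg["cpus"],
        "-drive", f"file={vm_dir / 'disk.qcow2'},if=virtio,format=qcow2",
        # User-mode networking; guest port 22 is forwarded to the host's loopback only.
        "-nic", f"user,model=virtio-net-pci,hostfwd=tcp:127.0.0.1:{cfg['ssh_port']}-:22",
        "-display", "none", "-daemonize",
    ]


def ssh_host_block(name: str, vm_dir: Path, user: str) -> str:
    """Render a ~/.ssh/config stanza that uses a dedicated key for this VM."""
    cfg = load_config(vm_dir)
    return (
        f"Host {name}\n"
        f"    HostName 127.0.0.1\n"
        f"    Port {cfg['ssh_port']}\n"
        f"    User {user}\n"
        f"    IdentityFile ~/.ssh/id_ed25519_{name}\n"
        f"    IdentitiesOnly yes\n"
    )


def start(name: str) -> None:
    """Boot the named VM in the background."""
    subprocess.run(qemu_argv(VMS_ROOT / name), check=True)
```

With this layout, start("work") would boot ~/vms/work in the background, and ssh work would then connect through the forwarded port. Binding the forward to 127.0.0.1 keeps each VM's SSH port off the network, and IdentitiesOnly stops ssh from offering the other VMs' keys.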